As much as I’d prefer to be working in F#, I’ve found myself doing quite a bit of JavaScript lately. I’m hardly a JavaScript expert but I like to think my skills are above average (although that could merely be wishful thinking). I won’t deny that JavaScript has its problems but I think that it has traditionally been maligned by things that aren’t necessarily the language’s fault; for instance, DOM inconsistencies are hardly under JavaScript’s control. Generally speaking, I really do enjoy working with JavaScript. That said, there are things such as prototypal inheritance that I always struggle with and I know I’m definitely not alone. That’s why I decided to take a look at TypeScript now that it has finally earned a 1.0 moniker.

By introducing arrow function expressions, static typing, and class/interface-based object-oriented programming, TypeScript brings sanity to JavaScript’s more troublesome areas and gently guides us to writing manageable code using familiar paradigms. Indeed, writing TypeScript feels almost the same as writing C# (with a dash of Java) which is hardly a surprise given that its lead architect is Anders Hejlsberg.

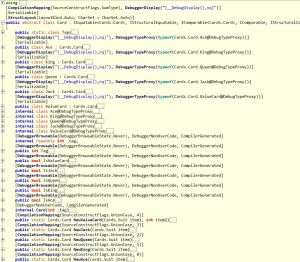

If you’re currently working primarily with the Microsoft stack, adding TypeScript to your environment is easy. Because TypeScript is a proper superset of JavaScript all of your existing JavaScript is already valid TypeScript. What’s more is that while the TypeScript compiler emits JavaScript, it also produces a .map file which allows you to debug using the original TypeScript source!

In this article, I’ll introduce a number of what I think are TypeScript’s most important features giving a brief overview of each. This isn’t intended to be a comprehensive survey of the language but hopefully it will give you enough information to whet your appetite.

Functional Goodness

As a functional guy, I strongly believe that JavaScript is at its best when its functional nature is embraced. TypeScript includes a few features that make functional programming more accessible.

Arrow Function Expressions

Arrow function expressions are hardly the most important aspect of TypeScript but I like them so much and will be using them throughout this article so I thought it best to describe them up front.

One of the things that bothers me about JavaScript is its lack of lambda expressions. JavaScript’s anonymous functions certainly serve the same purpose but oftentimes they introduce a lot of noise that can obscure the function’s logic. Consider a simple anonymous function for calculating the area of a circle:

function (radius) { return Math.PI * Math.pow(radius, 2); };

In this example, only about 60% of the code is actually what I’d consider useful; the rest is syntactic noise. Toss this form into a call to a higher-order function and the logic is further obfuscated. This has been especially bothersome since I’ve gotten so used to lambda expressions in C# and F#. ECMAScript 6 has a proposed arrow syntax that closely resembles C#’s lambda expressions but ECMAScript 6 adoption is a long way off. Fortunately, with TypeScript you can use it today. In TypeScript, you could redefine the above function like this:

radius => Math.PI * Math.pow(radius, 2);

The arrow function syntax is equivalent to the traditional syntax (the compiler actually expands it to the traditional syntax) but the amount of useful code is closer to 90% – a significant improvement!
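
To make the noise comparison concrete, here is a minimal sketch that passes the same area calculation to a higher-order function in both forms. The radii array and the variable names are invented for illustration.

```typescript
// Sample input; the values are made up for this sketch.
var radii: number[] = [1, 2, 3];

// Traditional anonymous function as the callback.
var areasTraditional = radii.map(function (radius) {
    return Math.PI * Math.pow(radius, 2);
});

// The same callback written as an arrow function expression.
var areasArrow = radii.map(radius => Math.PI * Math.pow(radius, 2));
```

Both calls produce the same array; only the amount of ceremony around the calculation differs.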

Optional and Default Parameters

One aspect of JavaScript that I consider to be both a blessing and a curse is its flexibility with function parameters. On one hand, it’s nice to be able to pass any number of values to a function and access them via the arguments pseudo-array. On the other hand, if I’ve taken the time to explicitly list each parameter, I should reasonably expect that a value will be supplied for each yet JavaScript offers no such guarantee. TypeScript enforces that the proper number of arguments are supplied. Let’s look at a simple function for formatting a typical American name:

function formatName(first : string, last : string, middle : string) {

return last + ", " + first + " " + middle;

}

Unfortunately for our function, not everyone has a middle name so if we try to invoke the function but supply values for only first and last, the compiler will warn that it cannot select an overload. To work around this, we can define middle as either an optional parameter or a default parameter.

To make middle an optional parameter, we simply need to append a question mark to the parameter name. We can then handle the parameter appropriately within the function body as shown:

function formatName(first : string, last : string, middle? : string) {

return last + ", " + first + (middle ? " " + middle : "");

}

Alternatively, to make middle a default parameter we simply need to replace the type annotation with an assignment as follows:

function formatName(first : string, last : string, middle = "") {

return last + ", " + first + " " + middle;

}

The compiler infers the proper type from the assignment and we’re guaranteed to have a value (unless someone passes null, of course).
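
Here is a small usage sketch of the two approaches side by side. The names formatNameOptional and formatNameDefault are hypothetical; they exist only so that both variants can live in one file. Note that the default-parameter version concatenates the separator unconditionally, so omitting the middle name leaves a trailing space.

```typescript
// Optional parameter: middle may be undefined, so the body checks for it.
function formatNameOptional(first: string, last: string, middle?: string) {
    return last + ", " + first + (middle ? " " + middle : "");
}

// Default parameter: middle is "" when omitted, but the separator is
// still appended unconditionally.
function formatNameDefault(first: string, last: string, middle = "") {
    return last + ", " + first + " " + middle;
}

var withMiddle = formatNameOptional("Jane", "Doe", "Q");  // "Doe, Jane Q"
var optionalOmitted = formatNameOptional("Jane", "Doe");  // "Doe, Jane"
var defaultOmitted = formatNameDefault("Jane", "Doe");    // "Doe, Jane " (trailing space)
```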

Function Overloading

Optional and default parameters are nice for simple scenarios but for more complex scenarios we can turn to function overloading. Because JavaScript doesn’t support overloading, TypeScript’s overloading capabilities are rather limited and are quite different from what you might expect.

TypeScript’s function overloads have two parts: the overload signatures and the implementation. The caveat is that the compiler must be able to resolve each overload signature to the implementation signature. Interestingly, each overload signature can resolve to an empty signature; thus, I’ve (so far) found it most convenient to provide the implementation as a parameterless function and resort to the traditional JavaScript approach of using the arguments pseudo-array to determine what was supplied based on the overload signatures. To see this in action, let’s look at an overload approach to the simple name formatting function we saw in the previous section:

function formatName(first : string, last : string);

function formatName(first : string, last : string, middle : string);

function formatName() {

var first = arguments[0];

var last = arguments[1];

var middle = arguments.length > 2 ? arguments[2] : undefined;

return last + ", " + first + (middle ? " " + middle : "");

}

By virtue of the overload signatures, we can invoke the formatName function with either 2 or 3 arguments. The implementation knows to expect both the first and last names so it assigns them to names unconditionally. The implementation also knows that it may or may not receive a middle name so it conditionally sets that value before concatenating and returning the values.
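
When the overloads differ by type rather than by argument count, another option is to give the implementation a single parameter of type Any and branch on typeof. The following is only a sketch of that idea; describeValue is a hypothetical function and not part of the formatName example.

```typescript
// Overload signatures: these are what callers see.
function describeValue(value: string): string;
function describeValue(value: number): string;

// Implementation signature: general enough to cover both overloads.
function describeValue(value: any): string {
    if (typeof value === "number") {
        return "number: " + value.toFixed(1);
    }
    return "string: " + value;
}

var describedNumber = describeValue(2);      // "number: 2.0"
var describedString = describeValue("two");  // "string: two"
```

Callers can only use the two declared signatures; passing a Boolean, for example, is rejected at compile time even though the implementation accepts Any.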

Built-in Types

With a name like “TypeScript” you probably correctly guessed that the type system plays a significant role within the language. TypeScript shares a number of common types with JavaScript. For the purposes of this article, I’ll assume you’re already familiar with the common types such as Boolean, Number, String, Null, and Undefined and focus on what TypeScript adds.

Any

JavaScript is a dynamically typed language. Although dynamic typing is convenient for simple applications, it often leads to confusion and fragility in more complex systems. TypeScript deviates from JavaScript by using static typing.

The TypeScript language reference states that static typing is optional but this seems a bit misleading. In TypeScript, all types derive from the Any type; thus, every type is compatible with Any. In this sense, the Any type is much like Object in .NET or Java as shown next:

var x: any = 10; x = "a"; x = true;

Here we’ve defined the variable x to be of type Any by including a type annotation in the definition and setting its initial value to 10. We then change the value to a string before finally settling on a Boolean.

Where Any differs from Object (and where I think the statement about optional static typing originates, though that’s pure speculation) is that a value of type Any can be assigned to any other type. The following code shows this principle in action:

var x: number = 10; var y: any = "a"; x = y;

In this example we define two variables, x and y. Here, x is explicitly defined as Number while y is defined as Any. On the final line, we assign y to x and the TypeScript compiler happily complies despite the differing types.
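
The practical consequence is that Any can smuggle a value of the wrong type past the compiler. The sketch below repeats the assignment with hypothetical variable names and then checks what the variable holds at run time.

```typescript
var count: number = 10;
var loose: any = "a";

// Accepted by the compiler because Any is assignable to every type.
count = loose;

// At run time, count now holds a string despite its number annotation.
var runtimeType: string = typeof count;  // "string"
```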

Void

The Void type is similar to the void type in C# in that it is generally used as a function’s return type to indicate that the function is called for its effect rather than for any particular value. Where TypeScript’s Void type differs from that of other languages is that Void is also the supertype of both Null and Undefined (Null is also a subtype of every type other than Undefined), so it’s technically possible to define a variable of type Void, but doing so is rather pointless.

Enumerations

TypeScript includes first-class support for enumerations through C#-like enum declarations.

Defining

TypeScript’s enumerations let you associate a series of labels to known numeric values thus avoiding “magic numbers.” A nice thing about TypeScript enumerations is that because they’re subtypes of Number, they can be used interchangeably with Number but the TypeScript compiler protects against assigning the wrong enumeration type. Here’s an example of a simple enumeration that defines some shapes:

enum Shapes {

None,

Circle,

Triangle,

Rectangle

}

The previous sample follows the default behavior of implicitly assigning zero-based sequential values to the labels such that None is 0, Circle is 1, and so on. Just as in C#, you can override this default behavior by explicitly providing values inline as follows:

enum Shapes {

None = 0,

Circle = 10,

Triangle = 20,

Rectangle = 30

}

Now, instead of being automatically associated with a value, each label is explicitly assigned. Should any label not have an explicitly associated value, the default sequential implicit association will be used.
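
To illustrate how explicit and implicit values mix, here is a sketch using a hypothetical Spacing enumeration. Each label without an explicit value takes the value of the preceding label plus one.

```typescript
enum Spacing {
    None = 0,
    Narrow = 10,
    Medium,       // 11, one more than Narrow
    Wide = 20,
    ExtraWide     // 21, one more than Wide
}

// Enumeration values are usable wherever a number is expected.
var mediumValue: number = Spacing.Medium;
var extraWideValue: number = Spacing.ExtraWide;
```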

Despite their syntactic similarities with C#, TypeScript enumerations are actually quite different constructs. The way a TypeScript enumeration is represented in JavaScript is fascinating and highlights how flexible JavaScript truly is. Here’s the JavaScript representation of the Shapes enumeration using the default (generated) values:

var Shapes;

(function (Shapes) {

Shapes[Shapes["None"] = 0] = "None";

Shapes[Shapes["Circle"] = 1] = "Circle";

Shapes[Shapes["Triangle"] = 2] = "Triangle";

Shapes[Shapes["Rectangle"] = 3] = "Rectangle";

})(Shapes || (Shapes = {}));

The first time I saw this code it took me a little while to understand what it was doing but it’s actually quite simple once you recognize that it relies heavily on the fact that the assignment operator returns a value. At its heart, initializing the enumeration involves an immediate function which dynamically creates two properties for each named value. The first property is named for the case it represents and is assigned the case value (Shapes["None"] = 0). The second property is named for the case value (via the nested assignment) and is assigned the case name (Shapes[Shapes["None"] = 0] = "None"). These dual properties establish a sort of bi-directional relationship that allows you to fairly easily retrieve either the name or value via the property indexer syntax. For instance, if you wanted to get the name “Circle” you’d write this:

Shapes[Shapes.Circle]
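
The sketch below exercises the lookup in both directions against the default-valued Shapes enumeration. The variable names are hypothetical.

```typescript
enum Shapes {
    None,
    Circle,
    Triangle,
    Rectangle
}

var circleValue: number = Shapes.Circle;          // 1
var circleName: string = Shapes[Shapes.Circle];   // "Circle"
var nameFromNumber: string = Shapes[2];           // "Triangle"
var valueFromName: number = Shapes["Rectangle"];  // 3
```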

Computed Members

When the case values are known constants (either explicitly supplied or implicitly generated), the members are said to be constant members. Where TypeScript enumerations get really interesting is that they can exploit the underlying JavaScript’s dynamic nature such that the explicitly associated values don’t need to be constants! Instead, each case can be based on a computation so long as that computation results in a number. For instance, we could define a Circles enumeration with each label associated with different computed areas like this:

var getArea = r => Math.PI * Math.pow(r, 2);

enum Circles {

Small = getArea(1),

Medium = getArea(5),

Large = getArea(10)

}

The TypeScript compiler behaves differently when resolving computed members than it does when resolving constant members. With constant members, the compiler replaces the member reference with the constant value. Conversely, computed members are left as member references.

Type Annotations and Inference

As in any language with static typing, you need a way to inform the compiler what type to expect. TypeScript achieves this through F#-like type annotations on variable declarations as shown next:

var x : number = 10; var y : string = "ten";

Here we’ve defined two variables, x and y, and annotated them to be of types number and string, respectively. Constantly telling the compiler what type to expect gets pretty tedious (it’s one of my main gripes about C#) but fortunately, TypeScript includes a rather robust type inference system which often makes explicit annotations unnecessary. Here are the same definitions without the type annotations:

var x = 10; var y = "ten";

While these may look like traditional JavaScript definitions, they are in fact statically typed. What’s happened is that the compiler inferred Number and String from the respective values as evidenced by hovering over the name in Visual Studio to reveal the appropriate type names.

TypeScript’s type inference can also work contextually on function parameters as shown in this example adapted from the TypeScript handbook:

window.onmousedown = e => console.log(e.button);

The TypeScript compiler knows about onmousedown so it is able to infer that e is of type MouseEvent. Interestingly, despite TypeScript’s typically bottom-up approach to type inference, a function parameter that has neither an annotation nor a contextual type is inferred to be of type Any. To see this in action, let’s take another look at the getArea function introduced in the earlier Circles example (as there, it’s written as an arrow function expression, which we covered in the Functional Goodness section):

var getArea = r => Math.PI * Math.pow(r, 2);

If we were to hover over getArea in Visual Studio, we’d see that its signature is (r: any) => number which indicates that the sole parameter, r, is of type Any and getArea’s return value is Number. Unfortunately, TypeScript’s type inference capabilities aren’t quite as strong as F#’s so despite Math.pow requiring a Number, r is still inferred to be Any. To improve getArea’s type safety we should revise it to identify the parameter type through an annotation like this:

var getArea = (r : number) => Math.PI * Math.pow(r, 2);

Now Visual Studio will correctly report the function’s type as (r: number) => number.
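
Pulling these inference rules together, here is a short sketch. All of the names are hypothetical, and the commented-out line marks an assignment the compiler would reject.

```typescript
var inferredNumber = 10;       // inferred as number from the initializer
var inferredString = "ten";    // inferred as string from the initializer
// inferredNumber = "eleven";  // would be rejected at compile time

// The annotation on r gives the function the type (r: number) => number.
var getAreaAnnotated = (r: number) => Math.PI * Math.pow(r, 2);

// The callback parameter is contextually typed by map, so r is number
// here even though it carries no annotation.
var inferredAreas = [1, 2].map(r => getAreaAnnotated(r));
```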

Classes

JavaScript has always been an object-based language. The problem with being object-based rather than object-oriented is that developers tend to want to treat it as an object-oriented language but get frustrated when core OO concepts like inheritance and encapsulation don’t work the same way as they do in more traditional OO languages. The name JavaScript has never helped distinguish it, either. It’s often not that these things can’t be done in JavaScript or even that they’re difficult, it’s just that JavaScript’s approach is so foreign that developers often find them confusing.

Much of that confusion stems from the fact that objects aren’t really objects in the way that traditional OO developers think of them. Instead, JavaScript objects are dictionaries (or hashes, if you prefer) with some additional syntactic support. Sure, we can define functions that serve as constructors, nest values, and even reference something in an instance via the this identifier but all we’re really doing is defining a series of key/value pairs. This is especially apparent when using object literals.

TypeScript addresses this disconnect through formal classes that somewhat resemble C# classes. For instance, here’s a simple class that represents a circle:

class Circle {

radius : number;

constructor(radius : number) {

this.radius = radius;

}

getArea() {

return Math.PI * Math.pow(this.radius, 2);

}

}

The traditional OO conventions should be pretty easy to spot in the Circle class. First, we have a field named radius, next is a constructor which accepts the radius, and finally there’s a method called getArea.

Aside from following a more conventional approach, TypeScript classes ensure that the JavaScript definitions are properly established on the object. For example, the getArea method is associated with Circle’s prototype rather than Circle itself thus facilitating reuse and inheritance.
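
One way to observe where each member ends up is to ask the objects directly. This sketch uses a copy of the class under the hypothetical name PrototypeDemoCircle so that it stands alone.

```typescript
class PrototypeDemoCircle {
    radius: number;

    constructor(radius: number) {
        this.radius = radius;
    }

    getArea() {
        return Math.PI * Math.pow(this.radius, 2);
    }
}

var demoCircle = new PrototypeDemoCircle(2);

// The field lives on the instance itself.
var fieldOnInstance = demoCircle.hasOwnProperty("radius");    // true

// The method does not; it is found through the prototype.
var methodOnInstance = demoCircle.hasOwnProperty("getArea");  // false
var methodOnPrototype =
    PrototypeDemoCircle.prototype.hasOwnProperty("getArea");  // true

// Every instance therefore shares a single function object.
var methodIsShared =
    demoCircle.getArea === new PrototypeDemoCircle(5).getArea; // true
```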

Access Control

In TypeScript, class members are public by default but can be made private by including the private access modifier. It’s important to note, though, that this access control does not extend beyond TypeScript’s boundaries into the compiled JavaScript. Because each member is directly associated with the object, anything defined as private will still be a property of the object and thus accessible via JavaScript. It’s also important to note that TypeScript has no concept of protected members.
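
The following sketch shows how thin that protection is. Vault is a hypothetical class; routing the instance through a variable of type Any stands in for plain JavaScript code that never sees the private modifier.

```typescript
class Vault {
    private secret: string;

    constructor(secret: string) {
        this.secret = secret;
    }

    // Only the first character is meant to be visible.
    hint() {
        return this.secret.charAt(0) + "...";
    }
}

var vault = new Vault("swordfish");
// vault.secret;  // rejected by the TypeScript compiler

// Once the static type is erased, the property is reachable like any other.
var untypedVault: any = vault;
var leaked: string = untypedVault.secret;  // "swordfish"
```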

Accessors (Properties)

Fields will often be sufficient for associating data with an object but should you require more granular control over how values are accessed or modified, you can use the get and set accessors in conjunction with a backing field. Let’s revise our Circle class to use this approach:

class Circle {

private _radius: number;

constructor(radius: number) {

this._radius = radius;

}

get radius() { return this._radius; }

set radius(value) { this._radius = value; }

getArea() {

return Math.PI * Math.pow(this._radius, 2);

}

}

Now the Circle class has a private _radius field. Access to that field is controlled through the get and set radius functions. If we wanted to make the radius value read-only, we could omit the set function; either way, access to the _radius value is controlled only within the confines of TypeScript.
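
Accessors earn their keep when the setter does more than copy a value. As a sketch, the hypothetical GuardedCircle class below rejects negative radii; the validation rule is an assumption made for illustration. As far as I’m aware, the 1.0 compiler only emits accessors when targeting ECMAScript 5 or later.

```typescript
class GuardedCircle {
    private _radius: number = 0;

    constructor(radius: number) {
        // Assigning through the property runs the setter's validation.
        this.radius = radius;
    }

    get radius() { return this._radius; }

    set radius(value: number) {
        if (value < 0) {
            throw new Error("radius must not be negative");
        }
        this._radius = value;
    }
}

var guarded = new GuardedCircle(3);
guarded.radius = 4;

// An invalid assignment throws and leaves the stored value untouched.
var rejected = false;
try {
    guarded.radius = -1;
} catch (e) {
    rejected = true;
}
```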

Methods

Like classes in more traditional OO languages, TypeScript classes support methods. TypeScript methods are just regular functions with a streamlined syntax. One notable aspect of defining functions as methods as opposed to fields is that the compiler attaches methods to the object’s prototype rather than an individual instance which plays more nicely with JavaScript inheritance.

Since methods are just functions, it’s possible to overload them in the same manner as a function.

Constructors

TypeScript classes are initialized through constructor functions. You can either define your own constructor function or rely on the default constructor functions provided by the compiler. We’ve already seen an example of a TypeScript constructor so I won’t repeat it here.

Automatic Constructors

If you don’t define a constructor function, the compiler will generate one automatically for you. In many cases, the automatic constructor function will have no parameters and perform no initialization actions beyond member variable initialization. However, if the class is derived from another class, the automatic constructor will accept the same parameters as the base class’ constructor and pass the arguments on to the base class before initializing member variables.
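
Here is a minimal sketch of the derived-class case. LabeledBase and LabeledDerived are hypothetical names; the derived class declares no constructor, yet it accepts the base class’ argument and still initializes its own member.

```typescript
class LabeledBase {
    label: string;

    constructor(label: string) {
        this.label = label;
    }
}

// No constructor here: the automatic one forwards its arguments to
// LabeledBase and then initializes suffix.
class LabeledDerived extends LabeledBase {
    suffix = "!";

    decorated() {
        return this.label + this.suffix;
    }
}

var derivedInstance = new LabeledDerived("hello");
var decoratedLabel = derivedInstance.decorated();  // "hello!"
```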

Custom Constructors

To create a custom constructor function we simply define a function named “constructor” along with any parameters we want to accept as shown in the Circle class above. Like functions and methods, it’s possible to overload constructors.

Parameter Properties

As a final note about TypeScript constructors, if you specify an access modifier (public or private) for a parameter, that parameter will automatically become a field on the class. This approach is much like F#’s (or C# 6’s) primary constructors. We’ll see an example of this a bit later when we look at interfaces.
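
In the meantime, a side-by-side sketch may help. The two hypothetical classes below are intended to be equivalent; the second simply lets the access modifiers declare and assign the fields.

```typescript
// Explicit fields plus explicit assignments.
class PointExplicit {
    x: number;
    y: number;

    constructor(x: number, y: number) {
        this.x = x;
        this.y = y;
    }
}

// The public modifiers turn each parameter into a field.
class PointConcise {
    constructor(public x: number, public y: number) { }
}

var explicitPoint = new PointExplicit(1, 2);
var concisePoint = new PointConcise(1, 2);
```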

Inheritance

As I mentioned at the beginning of the article, prototypal inheritance is something I typically mess up when coding straight JavaScript. It’s not necessarily that prototypal inheritance is hard, it’s just that I typically forget some step. Rather than relying on search engines or memory to get it right, TypeScript provides a familiar, Java-like inheritance syntax.

Central to TypeScript’s inheritance model is the extends keyword which allows you to specify the base class as shown in the following sample:

class Shape {

description: string;

constructor (description : string) {

this.description = description;

}

getArea() { return NaN; }

toString() { return this.description; }

}

class Circle extends Shape {

radius : number;

constructor (radius: number) {

super("Circle");

this.radius = radius;

}

getArea() {

return Math.PI * Math.pow(this.radius, 2);

}

toString() {

var area = this.getArea();

return super.toString() + " (" + area.toString() + ")";

}

}

In the preceding code, the Circle class extends the Shape class and overrides both of its methods (note that TypeScript has no concept of abstract members). Because the Circle class has a custom constructor we needed to explicitly invoke Shape’s constructor. Had we used an automatic constructor, the compiler would have handled this detail for us.

Derived classes can access the base class’ public members via the super identifier as shown in the Circle class’ toString override. Because the TypeScript compiler handles the details of ensuring that the correct execution context (this) is used, we could just as easily have forgone overriding toString by including the call to getArea in the Shape class but I thought it would be more useful to see the super identifier in action.
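
For comparison, here is a sketch of that alternative, with the call to getArea moved into the base class. BaseShape and RoundShape are hypothetical stand-ins for Shape and Circle so the snippet stands alone.

```typescript
class BaseShape {
    description: string;

    constructor(description: string) {
        this.description = description;
    }

    getArea() { return NaN; }

    // this.getArea() dispatches to the most derived implementation.
    toString() {
        return this.description + " (" + this.getArea().toString() + ")";
    }
}

class RoundShape extends BaseShape {
    radius: number;

    constructor(radius: number) {
        super("Circle");
        this.radius = radius;
    }

    // Only getArea is overridden; toString is inherited unchanged.
    getArea() {
        return Math.PI * Math.pow(this.radius, 2);
    }
}

var roundText = new RoundShape(1).toString();       // "Circle (3.141592653589793)"
var baseText = new BaseShape("Unknown").toString(); // "Unknown (NaN)"
```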

Static Members

Although TypeScript doesn’t include the notion of a static class (modules fill that role as we’ll see a bit later), classes can have static members. To make a member static, simply prefix the definition with the static modifier. The following snippet shows a class with a static method:

class CircleGeometry {

static getArea(circle) {

return Math.PI * Math.pow(circle.radius, 2);

}

}
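
Static members are invoked through the class name rather than through an instance. The sketch below uses a hypothetical StaticCircleGeometry class and annotates the parameter with an inline object type, which is my own addition.

```typescript
class StaticCircleGeometry {
    static getArea(circle: { radius: number }) {
        return Math.PI * Math.pow(circle.radius, 2);
    }
}

// Called on the class itself; no instance is required.
var staticArea = StaticCircleGeometry.getArea({ radius: 2 });

// Instances don't receive the static member.
var visibleOnInstance = "getArea" in new StaticCircleGeometry();  // false
```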

Interfaces

Interfaces in TypeScript serve much the same purpose as interfaces in traditional OO languages in that they specify a contract to which an object must adhere. It’s important to recognize that TypeScript’s interfaces are purely logical constructs. Interfaces exist only within the TypeScript sandbox to help enforce type safety; you won’t find any traces of them in the compiled JavaScript. That said, I also consider them to be one of TypeScript’s most powerful and compelling features because of how they embrace the underlying JavaScript’s dynamic nature to improve type safety with code not only within your project but from outside as well.

Defining

Interface definition follows the familiar pattern from other traditional OO languages. Here’s how we could define an interface to represent an object that implements a getArea method:

interface Shape {

getArea () : number;

}

The Shape interface defines a single member: a function named “getArea” that accepts no parameters and returns a number. Whenever we have an instance of something that implements the Shape interface, we’re guaranteed that the instance will have a getArea function.

Implementing with Classes

Implementing interfaces with classes via the implements keyword is perhaps the most familiar way interfaces are used. For example, we could define a Circle class that implements the Shape interface as follows:

class Circle implements Shape {

constructor(public radius : number) {} // <-- note the public modifier; I told you we'd see it later!

getArea() { return Math.PI * Math.pow(this.radius, 2); }

}

As you can see, defining a class that implements an interface is almost identical to a normal definition – all we had to do was identify the interface via the implements keyword. Should you need to implement multiple interfaces, simply delimit the names with commas.

Type Compatibility

What’s interesting about TypeScript interfaces is that an object’s type compatibility is based on object structure rather than explicit definition. This greatly increases the power of TypeScript’s interfaces because it means that types including object literals or externally defined types can satisfy an interface’s requirements by virtue of having the correct members. Consider a function which simply prints out a shape’s area:

function writeArea (shape : Shape) {

document.write("The shape's area is: " + shape.getArea() + "<br />");

}

Here we’ve constrained the shape parameter to types that implement the Shape interface. While we certainly could pass along an instance of the Circle class we defined in the last section, it’s much more interesting to use an object literal! First, we’ll create the function that creates and returns the literal:

function createCircle (radius : number) {

return { getArea: () => Math.PI * Math.pow(radius, 2) };

}

The createCircle function accepts the radius and returns an object literal with a single member: a getArea function. If we inspect createCircle’s signature, we’ll find that its inferred return type is the anonymous object type { getArea: () => number; } and makes no mention of Shape. However, if we invoke the writeArea function, passing the result of createCircle as follows, the compiler is perfectly happy:

writeArea (createCircle(5));

The fact that the object literal includes the getArea function is enough to satisfy the compiler. If we wanted to get more explicit and further prove how the Shape interface is satisfied, we can annotate the createCircle function with a return value (rather than relying on the type inference) like this:

function createCircle (radius : number) : Shape {

return { getArea: () => Math.PI * Math.pow(radius, 2) };

}

Even with the type annotation in place, the compiler recognizes that the object literal meets the requirements defined by the interface. Used like this, object literals are conceptually similar to F#’s object expressions in that they can be created ad-hoc but still satisfy the type requirements.
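
Structural compatibility applies to classes as well. In the sketch below, the hypothetical Square class never mentions the interface, yet it’s accepted alongside an object literal. I’ve used the names HasArea and describeArea, and returned a string instead of calling document.write, so the snippet runs outside a browser.

```typescript
interface HasArea {
    getArea(): number;
}

function describeArea(shape: HasArea) {
    return "The shape's area is: " + shape.getArea();
}

// No implements clause; the matching getArea member is enough.
class Square {
    side: number;

    constructor(side: number) {
        this.side = side;
    }

    getArea() { return this.side * this.side; }
}

var fromClass = describeArea(new Square(2));           // "The shape's area is: 4"
var fromLiteral = describeArea({ getArea: () => 6 });  // "The shape's area is: 6"
```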

Describing Functions

One of my favorite TypeScript features is that interfaces can also represent function signatures! Function signatures can get quite verbose, especially when dealing with generics (something I’m not covering in this article beyond this brief glimpse). To improve maintainability, you might consider leveraging this feature. For now, we’ll stick with a simple example of how you might define an interface that represents a predicate:

interface Predicate<T> {

(item: T): boolean;

}

Using this form we’re not defining which members must be implemented, we’re defining the arguments and return type of a function! Now, rather than repeating the signature everywhere, we can simply use the interface name instead as shown in this filter function:

function filter<T> (source : T[], callback : Predicate<T>) {

var result = [];

for(var i = 0; i < source.length; i++) {

var value = source[i];

if (callback(value)) result[result.length] = value;

}

return result;

}

If we wanted to use this function to filter out all of the odd numbers from an array we could write:

var evenNumbers =

filter(

[1, 1, 2, 3, 5, 8, 13, 21, 34, 55, 89, 144],

i => i % 2 === 0);

Notice how we didn’t need to do anything special with the supplied function – we simply passed it as we would any other argument. The compiler even inferred the correct type parameter for the filter function and, by extension, the Predicate interface!
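
The same interface can also annotate variables and return types, which makes it easy to build predicates from other predicates. This is a sketch under my own assumptions: isEven, negate, and the sample array are hypothetical, and the built-in Array filter method stands in for the filter function above.

```typescript
interface Predicate<T> {
    (item: T): boolean;
}

// A named predicate; n is contextually typed as number.
var isEven: Predicate<number> = n => n % 2 === 0;

// Builds a new predicate that inverts an existing one.
function negate<T>(predicate: Predicate<T>): Predicate<T> {
    return item => !predicate(item);
}

var isOdd = negate(isEven);

var sampleNumbers = [1, 1, 2, 3, 5, 8];
var evens = sampleNumbers.filter(isEven);  // [2, 8]
var odds = sampleNumbers.filter(isOdd);    // [1, 1, 3, 5]
```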

Modules

Modules provide a convenient mechanism for grouping related code elements while providing some degree of encapsulation and access control. The primary benefit of organizing code in this manner is to avoid polluting the global namespace with miscellaneous types and functions, thus reducing the likelihood of naming conflicts. In many ways they’re very much like modules in F# or static classes in C#. Let’s take a look at how to define a module continuing with our theme of circle geometry:

module CircleGeometry {

export function getArea(radius : number) {

return Math.PI * Math.pow(radius, 2);

}

export function getCircumference(radius : number) {

return 2 * Math.PI * radius;

}

}

The CircleGeometry module defines two functions, getArea and getCircumference. By default, module members are private so in order to access these functions from outside the module we export them using the export keyword. With the module in place, we’re free to invoke its functions with a qualified name like this:

CircleGeometry.getCircumference(5);

Despite their similarity to static classes, modules are represented quite differently in the compiled JavaScript. To see just how different modules and classes are, let’s create a functionally equivalent class with static members and compare the generated JavaScript. First, here’s the class definition:

class CircleGeometry {

static getArea(radius : number) {

return Math.PI * Math.pow(radius, 2);

}

static getCircumference(radius : number) {

return 2 * Math.PI * radius;

}

}

This results in the following JavaScript:

var CircleGeometry = (function () {

function CircleGeometry() {

}

CircleGeometry.getArea = function (radius) {

return Math.PI * Math.pow(radius, 2);

};

CircleGeometry.getCircumference = function (radius) {

return 2 * Math.PI * radius;

};

return CircleGeometry;

})();

The generated JavaScript isn’t particularly complicated; it assigns the result of an immediate function to the name CircleGeometry. The immediate function creates an empty function called CircleGeometry (which would serve as a constructor function should we want to create an instance), sets the two functions as properties of that function, and returns it. Now, compare that with what’s generated for the module we defined earlier:

var CircleGeometry;

(function (CircleGeometry) {

function getArea(radius) {

return Math.PI * Math.pow(radius, 2);

}

CircleGeometry.getArea = getArea;

function getCircumference(radius) {

return 2 * Math.PI * radius;

}

CircleGeometry.getCircumference = getCircumference;

})(CircleGeometry || (CircleGeometry = {}));

Again, the generated JavaScript isn’t very complicated but this time, the behavior is much different. Here, a variable called CircleGeometry is declared but not assigned. Next, an immediate function is defined and invoked, passing either an existing CircleGeometry object or a new object (via the assignment operator’s return value) that will expose the functions as properties. Inside the immediate function’s body we can clearly see both the getArea and getCircumference functions but, rather than being directly assigned to properties on the supplied object, the functions are defined as, well, functions! It’s only after the function definitions that they’re assigned to properties on the supplied object by name. This difference may appear subtle but it means that the immediate function for the module definition is serving as a closure thereby hiding the actual implementations. A script certainly could reassign the properties but it wouldn’t necessarily have access to anything else defined within the closure, particularly not any members that weren’t exported.
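
To see the closure doing its job, consider this sketch with a helper that isn’t exported. CircleMath and square are hypothetical names. I’ve also used the namespace keyword, which TypeScript releases from 1.5 onward adopted for this construct; with the 1.0 compiler, write module instead.

```typescript
namespace CircleMath {
    // Not exported: this helper exists only inside the closure.
    function square(value: number) {
        return value * value;
    }

    export function getArea(radius: number) {
        return Math.PI * square(radius);
    }
}

var moduleArea = CircleMath.getArea(2);

// Even with static typing erased, the helper is not a property of the
// module object.
var untypedModule: any = CircleMath;
var helperType: string = typeof untypedModule.square;  // "undefined"
```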

Summary

As a superset of JavaScript, TypeScript is by no means a perfect language. It carries JavaScript’s legacy (both good and bad) but I see this as more of a strength than a weakness because the compiler takes advantage of many of JavaScript’s best features and wrangles them into more familiar object-oriented and functional patterns. Taken together, the language features described in this article should lead to simpler, more maintainable code which is as important as ever as application complexity continues to increase.